Guide to Loading Data from Azure Cloud to Snowflake

Last updated: October 2024

Quick answer: To load data from Azure Blob Storage into Snowflake, create a storage integration using CREATE STORAGE INTEGRATION with your Azure tenant ID and container URL, authorize Snowflake through the integration’s consent URL, create a file format (CSV/Parquet), define an external stage pointing to the blob path, then run COPY INTO to load the data into your target Snowflake table.

Introduction

Loading data from Azure Blob Storage into Snowflake is a core ETL task for data engineers who work with both platforms. For a secure private connection between Azure and Snowflake, see our Snowflake Azure PrivateLink implementation guide. This step-by-step guide walks you through configuring an Azure Blob Storage integration in Snowflake, creating an external stage, and using the COPY INTO command to load CSV data from Azure into a Snowflake table.

Prerequisites

- A Snowflake account with permissions to create databases, storage integrations, file formats, stages, and tables. Creating a storage integration requires the ACCOUNTADMIN role or a role with the CREATE INTEGRATION privilege.

- An Azure account with an active subscription and a Blob Storage container holding the data to load (e.g., students_dataset_50.csv).

- Your Azure tenant ID and Blob Storage URL, both needed for the integration setup.

- Access to the Azure Portal (`portal.azure.com`).

- Basic familiarity with the Snowflake web interface and the Azure Portal.

Procedure

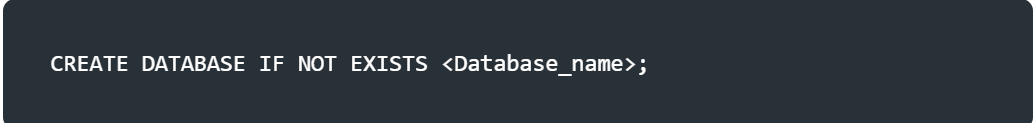

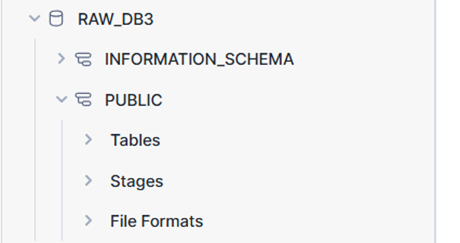

Step 1: Create a Database

First, create a database in Snowflake to store your data. The following command creates a database named raw_azure_db if it doesn’t already exist.

Use the default schema or create a separate one with CREATE SCHEMA. This guide uses the default PUBLIC schema.
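A minimal sketch of this step in Snowflake SQL, using the raw_azure_db database and the default PUBLIC schema described above. The commented-out lines show the alternative of a dedicated schema; the name azure_landing is hypothetical.

```sql
-- Create the database only if it does not exist yet
CREATE DATABASE IF NOT EXISTS raw_azure_db;

-- Work in the default PUBLIC schema of that database
USE SCHEMA raw_azure_db.PUBLIC;

-- Alternative: a dedicated schema (azure_landing is a hypothetical name)
-- CREATE SCHEMA IF NOT EXISTS raw_azure_db.azure_landing;
-- USE SCHEMA raw_azure_db.azure_landing;
```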

Procedure to set up Azure Blob Storage:

Step 1.1: Sign In to the Azure Portal

- Go to [portal.azure.com](https://portal.azure.com).

- Sign in with your Azure account credentials.

- If you’re new, follow the prompts to set up a free trial account.

Step 1.2: Create a Resource Group

A resource group is a logical container for Azure resources. Let’s create one for our storage account.

- In the Azure Portal, click Create a resource (top-left corner) or search for Resource groups.

- Click Create.

- Fill in the details:

  - Subscription: Select your Azure subscription.
  - Resource group: Enter a name (e.g., myazure).
  - Region: Choose a region (e.g., East US).

- Click Review + create, then Create.

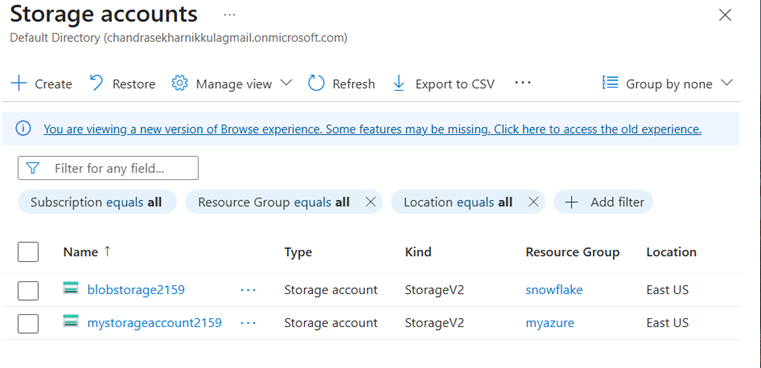

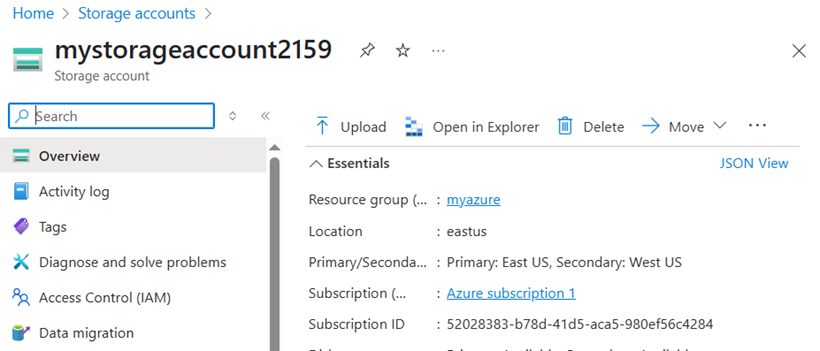

Step 1.3: Create a Storage Account

The storage account provides a unique namespace for your Blob Storage data.

- In the Azure Portal, search for Storage accounts and select it.

- Click Create.

- Fill in the details:

  - Subscription: Select your subscription.
  - Resource group: Select the resource group you created (e.g., myazure).
  - Storage account name: Enter a globally unique name (e.g., mystorageaccount2159). The name must be 3 to 24 characters long and contain only lowercase letters and numbers.
  - Region: Choose the same region as your resource group (e.g., East US).
  - Performance: Select Standard (sufficient for most use cases).
  - Redundancy: Choose Locally-redundant storage (LRS) for cost efficiency.

- Click Review + create, then Create.

- Once created, click Go to resource to view the storage account.

The storage account used throughout this guide is named mystorageaccount2159.

Step 1.4: Upload the Data to Azure

Go to Storage accounts, click Refresh, and open the storage account you created. Click Upload in the top toolbar, choose the blob container to upload into, browse to your data file (e.g., students_dataset_50.csv), and upload it.

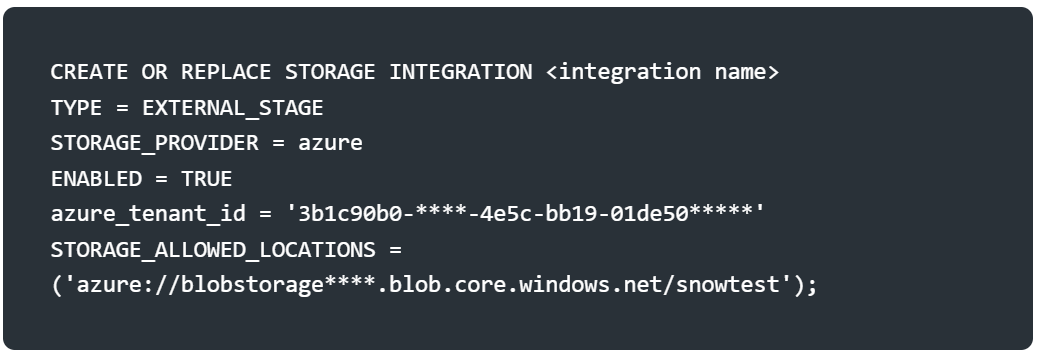

Step 2: Create a Storage Integration

To connect Snowflake with Azure Blob Storage, create a storage integration. This defines the connection parameters, including the Azure tenant ID and allowed storage locations.
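A sketch of the integration DDL, assuming a hypothetical integration name azure_blob_int and placeholder values for the tenant ID and container. The account name mystorageaccount2159 comes from the Azure setup above; replace `<your-tenant-id>` and `<container>` with your own values. The GRANT at the end is optional and assumes SYSADMIN is the role that will create the stage.

```sql
-- Storage integrations are account-level objects: ACCOUNTADMIN (or a role
-- granted CREATE INTEGRATION) is required to create one.
USE ROLE ACCOUNTADMIN;

-- azure_blob_int is a hypothetical name; <your-tenant-id> and <container>
-- are placeholders. IF NOT EXISTS leaves an already-authorized integration
-- untouched if the script is rerun.
CREATE STORAGE INTEGRATION IF NOT EXISTS azure_blob_int
  TYPE = EXTERNAL_STAGE
  STORAGE_PROVIDER = 'AZURE'
  ENABLED = TRUE
  AZURE_TENANT_ID = '<your-tenant-id>'
  STORAGE_ALLOWED_LOCATIONS = ('azure://mystorageaccount2159.blob.core.windows.net/<container>/');

-- Optional: let another role (assumed here to be SYSADMIN) use the integration
GRANT USAGE ON INTEGRATION azure_blob_int TO ROLE SYSADMIN;
```

Any stage URL you create later must fall under one of the STORAGE_ALLOWED_LOCATIONS entries.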

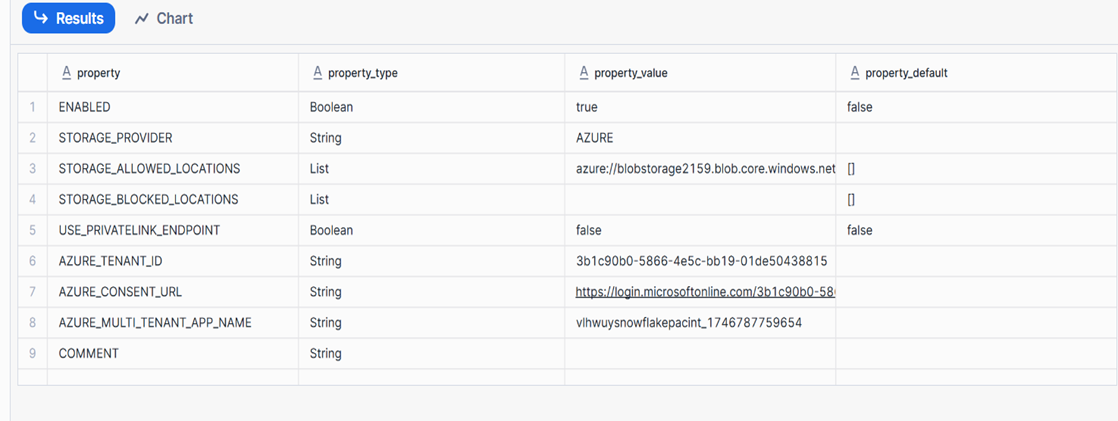

After creating the integration, describe it to verify the setup:
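A minimal example, assuming the integration is named azure_blob_int (a hypothetical name; use your own). The second query is optional and filters the DESC output down to the two properties needed for authorization.

```sql
-- Show all integration properties, including AZURE_CONSENT_URL
-- and AZURE_MULTI_TENANT_APP_NAME
DESC STORAGE INTEGRATION azure_blob_int;

-- Optional: keep only the properties used in the authorization step
SELECT "property", "property_value"
FROM TABLE(RESULT_SCAN(LAST_QUERY_ID()))
WHERE "property" IN ('AZURE_CONSENT_URL', 'AZURE_MULTI_TENANT_APP_NAME');
```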

Authorize Snowflake Using AZURE_CONSENT_URL

After creating the storage integration, you need to authorize Snowflake to access your Azure Blob Storage. Here’s how:

- Copy the URL: Copy the AZURE_CONSENT_URL value from the DESC STORAGE INTEGRATION output.

- Access the URL: Paste the URL into a browser and sign in with an Azure account that has admin privileges in your tenant.

- Grant Permissions: Review the permissions requested by Snowflake (e.g., access to your Blob Storage). Click Accept to authorize.

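Note: Ensure the Azure tenant ID and storage URL match your Azure account details. Snowflake also needs role-based access control (RBAC) permissions on the storage account: in the Azure Portal, open the storage account, go to Access Control (IAM) > Add role assignment, and assign the Storage Blob Data Reader role (read-only, enough for loading) to the Snowflake service principal. To find the service principal, search for the part of the AZURE_MULTI_TENANT_APP_NAME value before the underscore. Use Storage Blob Data Contributor instead if you also plan to unload data to the container.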

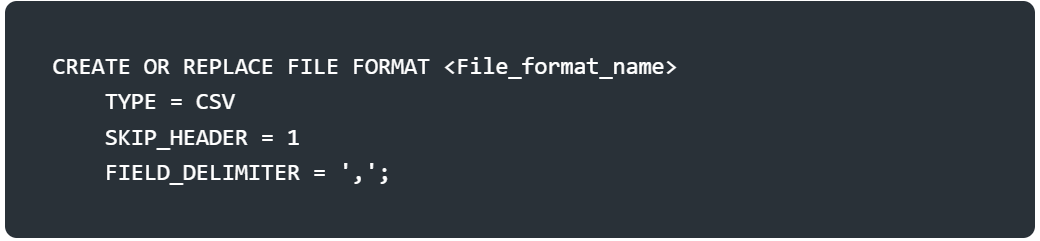

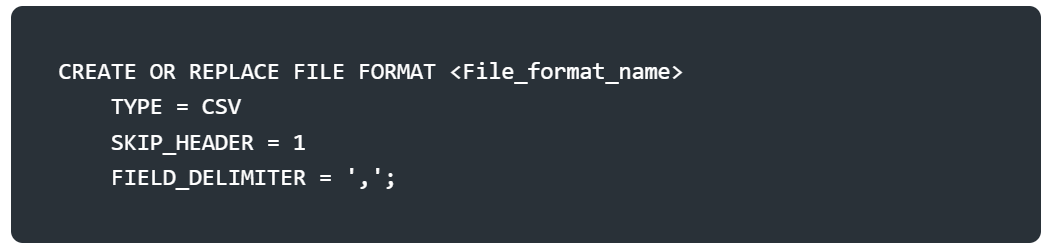

Step 3: Create a File Format

Define a file format to specify how Snowflake should parse the CSV file. Here, we create a CSV file format that skips the header row and uses a comma as the delimiter.
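A sketch of the file format, assuming the hypothetical name csv_format in raw_azure_db.PUBLIC. The first three options mirror the description above; the last three are optional extras, not part of the original guide, that help with quoted fields and stray whitespace.

```sql
-- csv_format is a hypothetical name
CREATE OR REPLACE FILE FORMAT raw_azure_db.PUBLIC.csv_format
  TYPE = 'CSV'
  FIELD_DELIMITER = ','          -- comma-separated fields
  SKIP_HEADER = 1                -- ignore the header row
  -- Optional extras (assumptions, not from the original guide):
  FIELD_OPTIONALLY_ENCLOSED_BY = '"'
  EMPTY_FIELD_AS_NULL = TRUE
  TRIM_SPACE = TRUE;
```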

Adjust the SKIP_HEADER or FIELD_DELIMITER based on your CSV file’s structure.

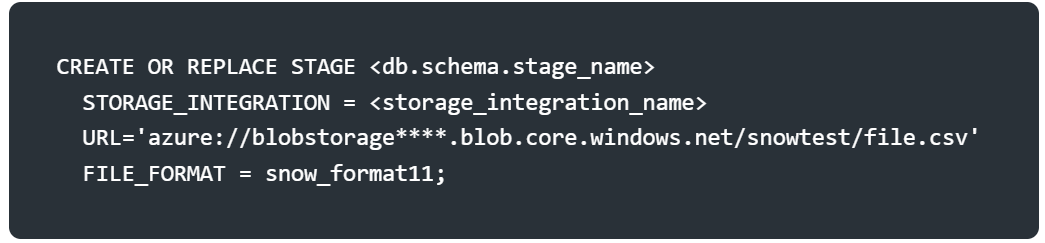

Step 4: Create a Stage

A stage in Snowflake is a reference to the external storage location (Azure Blob Storage). The stage links to the storage integration and specifies the file format.
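A minimal sketch of the stage, assuming the hypothetical names azure_stage (stage), azure_blob_int (storage integration), and csv_format (file format); `<container>` is a placeholder for your blob container. The URL must sit inside the integration’s STORAGE_ALLOWED_LOCATIONS.

```sql
-- azure_stage, azure_blob_int and csv_format are hypothetical names
CREATE OR REPLACE STAGE raw_azure_db.PUBLIC.azure_stage
  STORAGE_INTEGRATION = azure_blob_int
  URL = 'azure://mystorageaccount2159.blob.core.windows.net/<container>/'
  FILE_FORMAT = (FORMAT_NAME = 'raw_azure_db.PUBLIC.csv_format');
```

Because the integration holds the credentials, the stage definition contains no secrets.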

Step 5: Verify Stage Contents

Preview the data in the stage to confirm the file is accessible and formatted correctly.

This query displays the raw contents of the CSV file as a single column. Check for any parsing issues here.
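Another way to check the stage, sketched under the same hypothetical names (azure_stage, csv_format): LIST confirms which files Snowflake can see, and querying the stage with the named file format shows how each row will be split into positional columns ($1, $2, ...) before you load it.

```sql
-- Files visible through the stage
LIST @raw_azure_db.PUBLIC.azure_stage;

-- First rows as parsed by the file format; add $4, $5, ... to match your CSV
SELECT
  METADATA$FILENAME        AS file_name,
  METADATA$FILE_ROW_NUMBER AS row_num,
  t.$1, t.$2, t.$3
FROM @raw_azure_db.PUBLIC.azure_stage (FILE_FORMAT => 'raw_azure_db.PUBLIC.csv_format') t
LIMIT 10;
```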

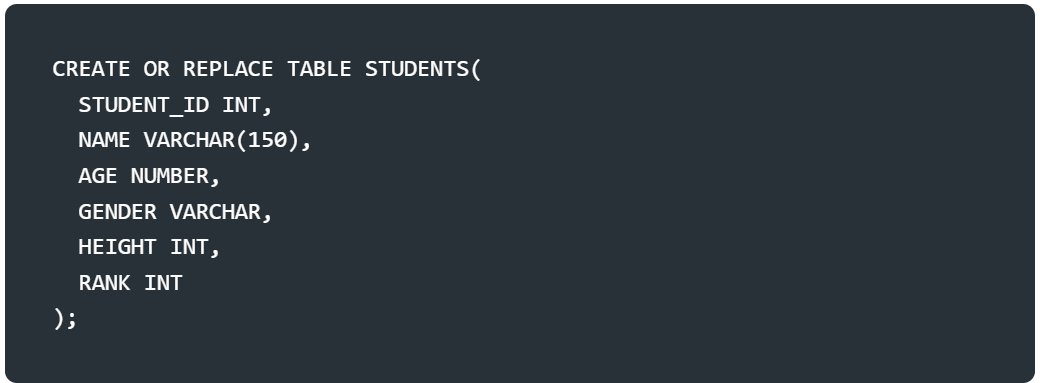

Step 6: Create a Target Table

Create a table in Snowflake to store the data from the CSV file. The table’s schema should match the CSV file’s structure.

Make sure the column order and data types match the CSV file’s columns. By default, COPY INTO maps CSV fields to table columns by position, not by name.
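A sketch of the target table. The STUDENTS2 name comes from this guide, but the columns below are hypothetical because the guide does not list the fields of students_dataset_50.csv; adjust names, order, and types to your file.

```sql
-- Hypothetical columns: replace with the actual fields of your CSV,
-- in the same order they appear in the file
CREATE OR REPLACE TABLE raw_azure_db.PUBLIC.STUDENTS2 (
  student_id   INTEGER,
  student_name VARCHAR,
  age          INTEGER,
  gender       VARCHAR,
  score        NUMBER(5, 2)
);
```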

Step 7: Load Data into the Table

Use the COPY INTO command to load the data from the Azure stage into the STUDENTS2 table. The ON_ERROR = CONTINUE option makes Snowflake skip rows that fail to load and continue with the rest of the file, so review the COPY INTO output for errors afterwards.
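A sketch of the load, assuming the hypothetical stage azure_stage and file format csv_format, and assuming students_dataset_50.csv sits directly under the stage URL (FILES paths are relative to it). The VALIDATE query afterwards returns the rows rejected by the most recent COPY INTO on the table.

```sql
-- Load one named file; drop the FILES clause to load every file in the stage
COPY INTO raw_azure_db.PUBLIC.STUDENTS2
  FROM @raw_azure_db.PUBLIC.azure_stage
  FILES = ('students_dataset_50.csv')
  FILE_FORMAT = (FORMAT_NAME = 'raw_azure_db.PUBLIC.csv_format')
  ON_ERROR = 'CONTINUE';

-- Inspect rows skipped by ON_ERROR = CONTINUE in the last load
SELECT * FROM TABLE(VALIDATE(raw_azure_db.PUBLIC.STUDENTS2, JOB_ID => '_last'));
```

The COPY INTO result has one row per file with columns such as status, rows_parsed, rows_loaded, and errors_seen, which is the quickest place to spot a partial load.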

Step 8: Verify the Loaded Data

Query the table to confirm the data was loaded successfully.
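A few verification queries, sketched against the raw_azure_db.PUBLIC.STUDENTS2 table. The COPY_HISTORY lookup is an optional extra that shows per-file load status from the last 24 hours.

```sql
-- Compare with the number of data rows in the CSV (header excluded)
SELECT COUNT(*) AS row_count FROM raw_azure_db.PUBLIC.STUDENTS2;

-- Spot-check a few rows
SELECT * FROM raw_azure_db.PUBLIC.STUDENTS2 LIMIT 10;

-- Per-file load history for this table over the last 24 hours
SELECT file_name, status, row_count, row_parsed, first_error_message
FROM TABLE(raw_azure_db.INFORMATION_SCHEMA.COPY_HISTORY(
  TABLE_NAME => 'RAW_AZURE_DB.PUBLIC.STUDENTS2',
  START_TIME => DATEADD(HOURS, -24, CURRENT_TIMESTAMP())
));
```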

Conclusion

You have now loaded a CSV file from Azure Blob Storage into a Snowflake table. The process involves setting up a database, connecting Snowflake to Azure through a storage integration, defining a file format, staging the data, and loading it into a table. If you need to load data from your local machine instead, check out our guide on connecting Snowflake with SnowSQL. For loading JSON or semi-structured data from AWS S3, see our article on loading semi-structured data into Snowflake. If you encounter issues, verify the Azure URL, file format, and column mappings. For large datasets, split the data into multiple files so that COPY INTO can load them in parallel; Snowflake’s general guidance is to aim for files of roughly 100 to 250 MB compressed.

Frequently Asked Questions

Q: How do I load data from Azure to Snowflake?

To load data from Azure to Snowflake, create an Azure storage integration in Snowflake, set up an external stage pointing to your Azure Blob Storage, define a file format, and use the COPY INTO command to load the data into your Snowflake tables.

Q: What Azure storage types does Snowflake support?

Snowflake supports loading data from Azure Blob Storage and Azure Data Lake Storage (ADLS) Gen2. You can configure storage integrations to securely connect Snowflake to these Azure storage services.

Q: Do I need a storage integration for Azure to Snowflake data loading?

While you can use SAS tokens directly, creating a storage integration is the recommended approach. It provides a more secure and manageable way to connect Snowflake to Azure storage, avoiding the need to embed credentials in SQL statements.
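For comparison, a stage that authenticates with a SAS token stores the credential in its own definition, which is the drawback described above. This is a sketch; azure_sas_stage, `<container>`, and `<your-sas-token>` are placeholders, and csv_format is a hypothetical file format name.

```sql
-- No storage integration: the SAS token is embedded in the stage DDL
CREATE OR REPLACE STAGE raw_azure_db.PUBLIC.azure_sas_stage
  URL = 'azure://mystorageaccount2159.blob.core.windows.net/<container>/'
  CREDENTIALS = (AZURE_SAS_TOKEN = '<your-sas-token>')
  FILE_FORMAT = (FORMAT_NAME = 'raw_azure_db.PUBLIC.csv_format');
```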
