Loading Semi-Structured Data from AWS S3

Last updated: October 2024

Quick answer: To load semi-structured JSON data from AWS S3 into Snowflake, create a storage integration with your S3 IAM role, define an external stage pointing to the S3 bucket, set a JSON file format, then use COPY INTO with a VARIANT column to ingest raw JSON. You can then flatten nested JSON using Snowflake’s LATERAL FLATTEN function into structured tables.

Introduction

Loading semi-structured JSON data from AWS S3 into Snowflake is a common data engineering task that Snowflake handles natively with its VARIANT data type. If you need to set up IAM roles for S3 access, refer to our guide on streamlining IAM role creation for S3 bucket access. This step-by-step guide covers the complete S3-to-Snowflake ingestion workflow: creating a storage integration, configuring external stages, parsing JSON with Snowflake’s semi-structured data functions, and loading into both VARIANT and structured relational tables.

Pre-requisites

Before we start, ensure you have:

- A snowflake account with a role that has permissions to create databases, schemas, and stages.

- An AWS S3 bucket with JSON files.

- An AWS IAM role with access to the S3 bucket.

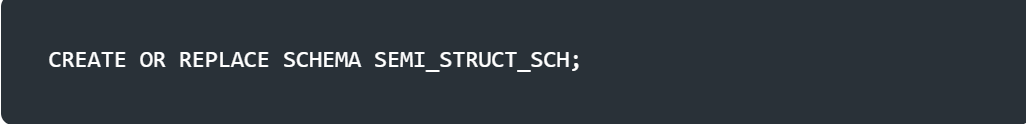

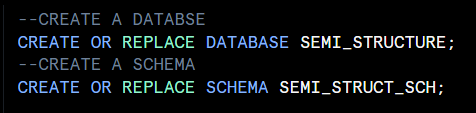

Step 1: Set up your Snowflake Environment

First, let’s create a database and schema to organize our work.

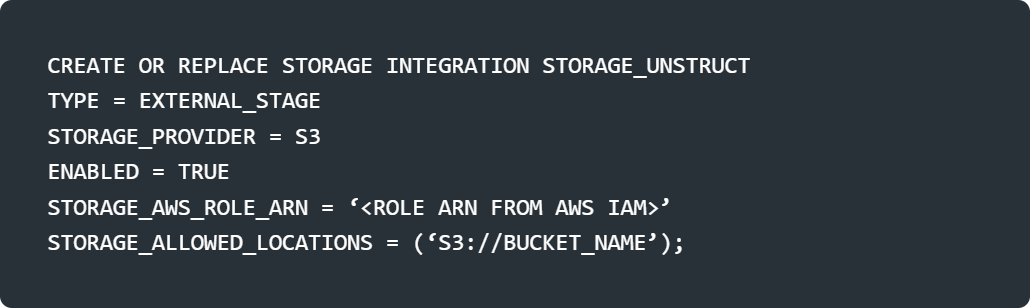

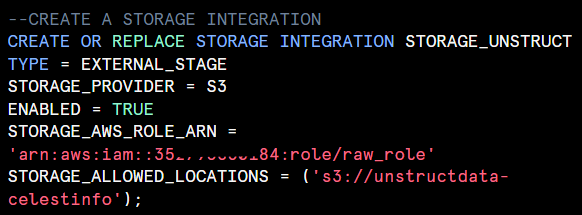

Step 2: Connect Snowflake to AWS S3

To access files in S3, we need a storage integration to securely link Snowflake with your S3 bucket.

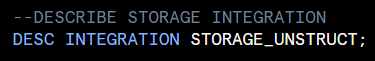

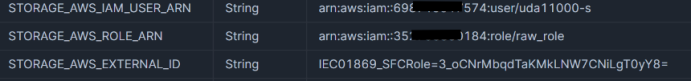

After creating storage integration, update the value of ‘STORAGE_AWS_EXTERNAL_ID’ and ‘STORAGE_AWS_IAM_USER_ARN’.

Copy the values from the output, and update the trust policy in AWS IAM role. If you cant understand this step please go through our previous blog.

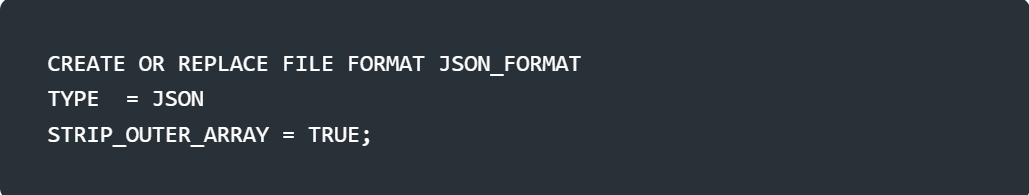

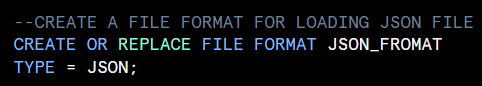

Step 3: Define a JSON File Format

Snowflake needs to know how to parse the JSON files. We’ll create a file format for JSON.

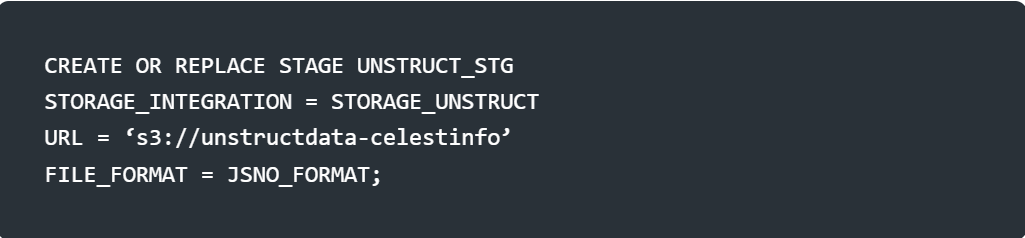

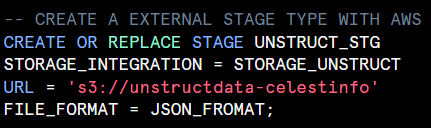

Step 4: Create an External Stage

An external stage points to your S3 bucket, linking it to Snowflake via the storage integration.

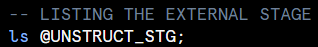

After creating the external stage, check the external stage has connected correctly or not. Just by listing, what file are there in the stage.

Step 5: Load JSON into an Unstructured Table

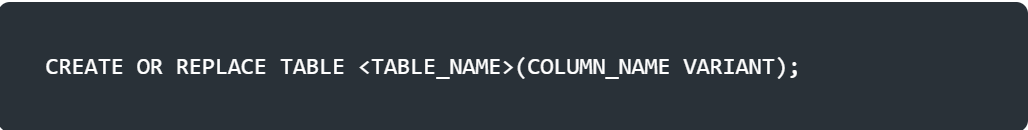

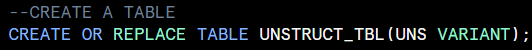

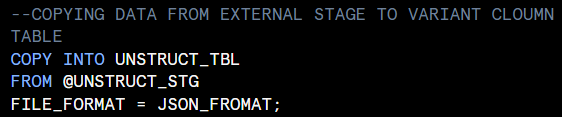

Let’s create a table with a VARIANT column to store the raw JSON data.

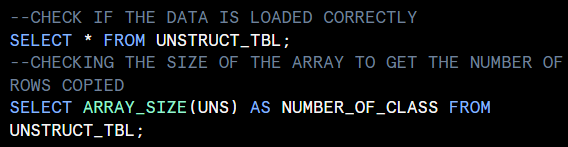

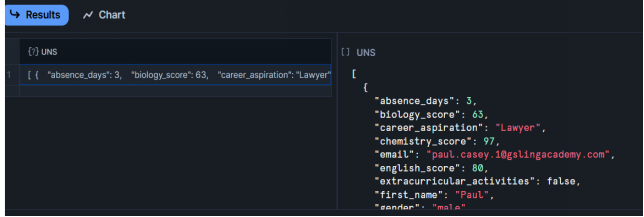

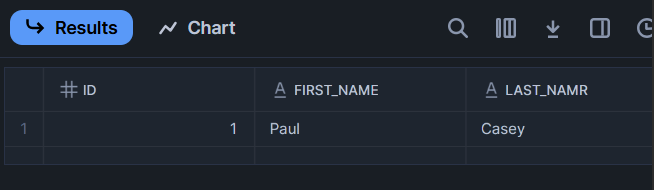

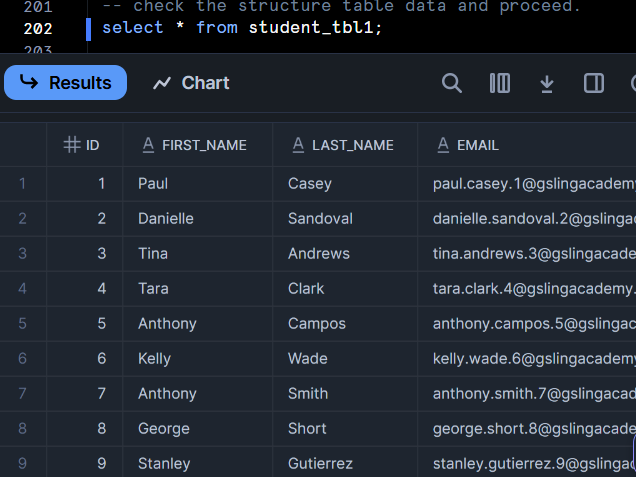

After copying the data from external stage, check if the data is loaded correctly.

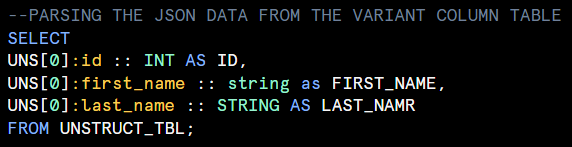

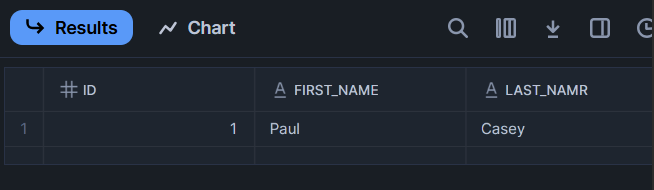

Step 6: Parse JSON Data

Now, let’s query and parse the JSON data in UNSTRUCT_TBL. We’ll use Snowflake’s JSON parsing capabilities.

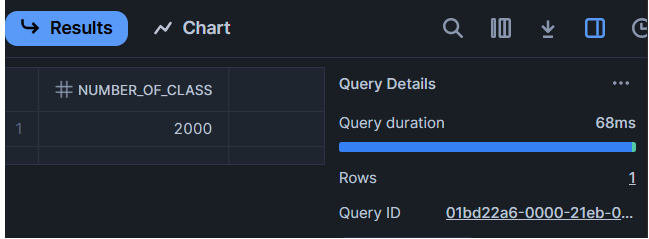

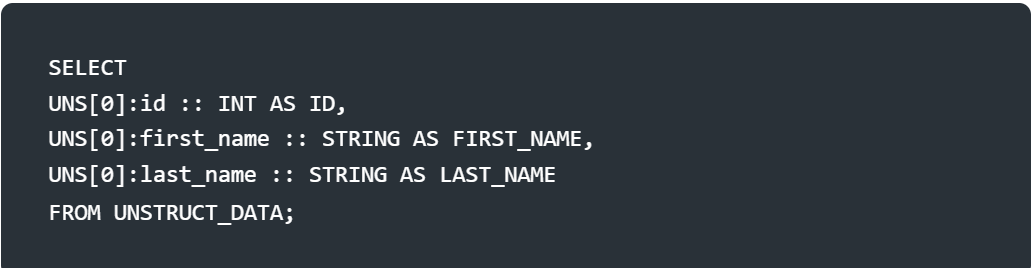

Simple Parsing with DOT Notation

If the JSON is a single object per row (not an array), use dot notation:

Note : while parsing, write the column names properly they are case sensitive.

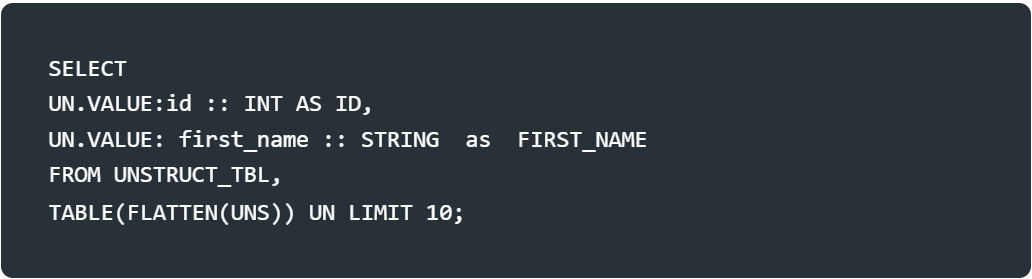

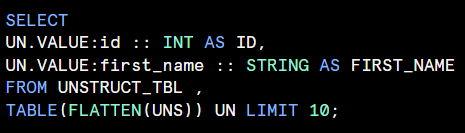

Parsing Arrays with FLATTEN

If the JSON contains arrays (nested fileds) use the FLATTEN function

Step 7: Load JSON into a Structured Table

To make the data more query-friendly, let’s load it into a structured table with defined columns.

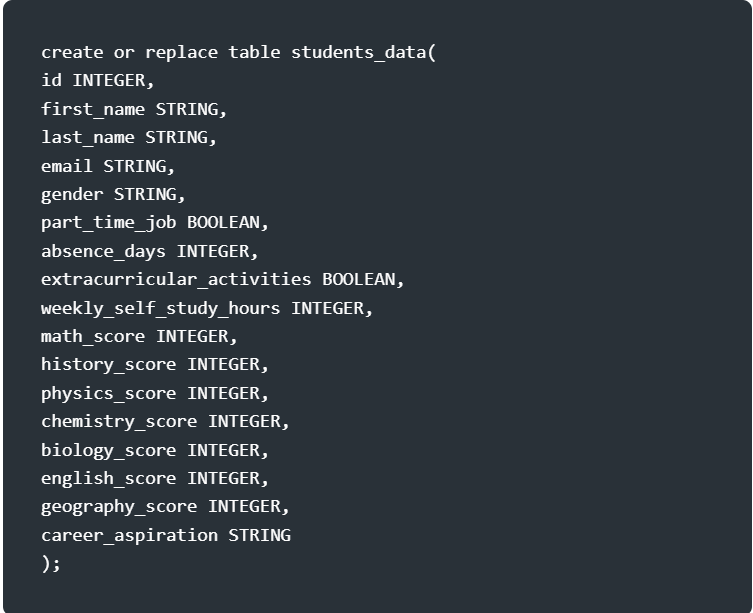

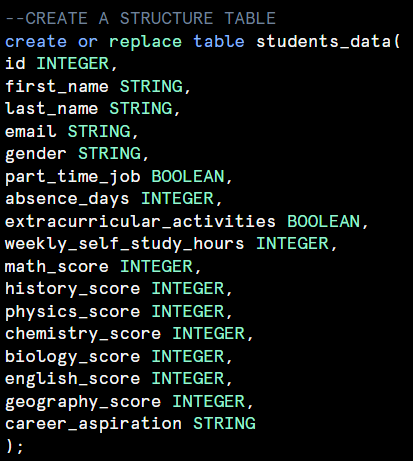

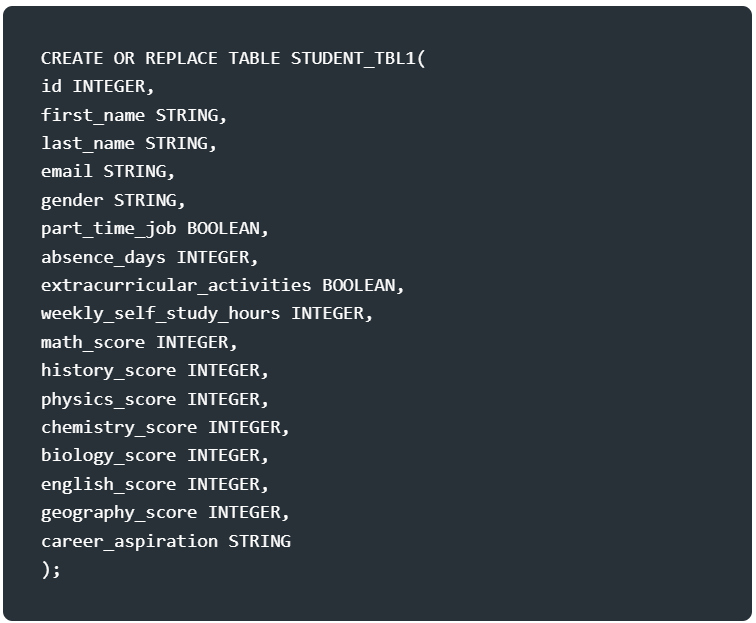

Create a Structured Table

Define a table matching the JSON schema.

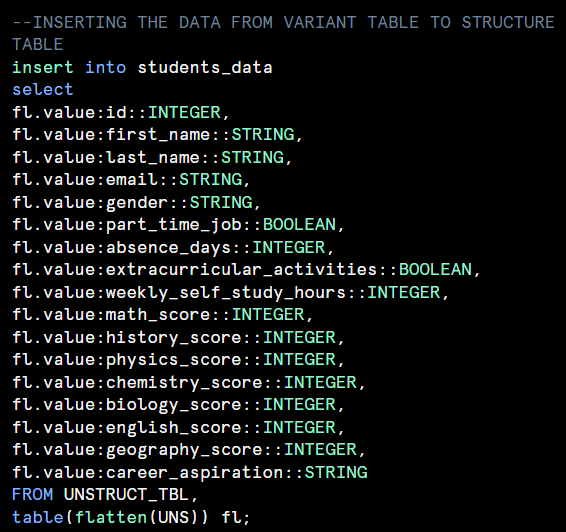

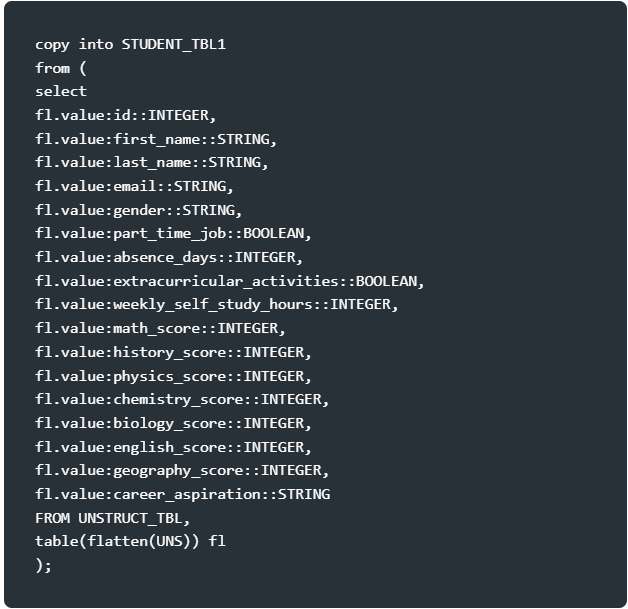

Insert Parsed JSON into the Structured Table

Extract fields from UNSTRUCT_TBL and insert them into STUDENTS_DATA.

Storage Note: Storing data in both UNSTRUCT_TBL and STUDENTS_DATA doubles storage costs. Consider dropping UNSTRUCT_TBL after loading if it’s no longer needed:

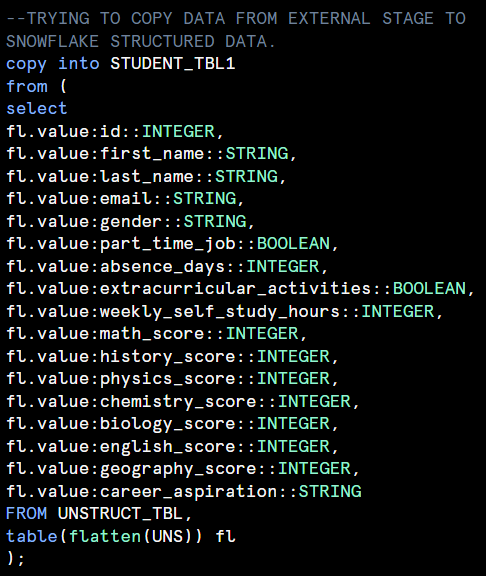

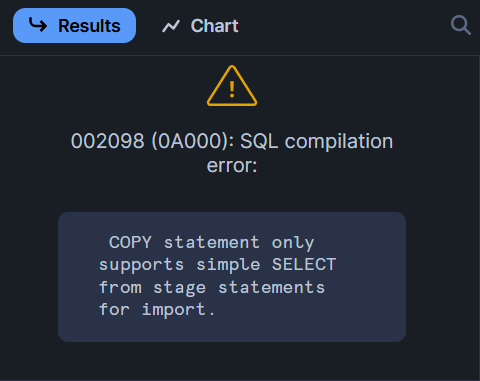

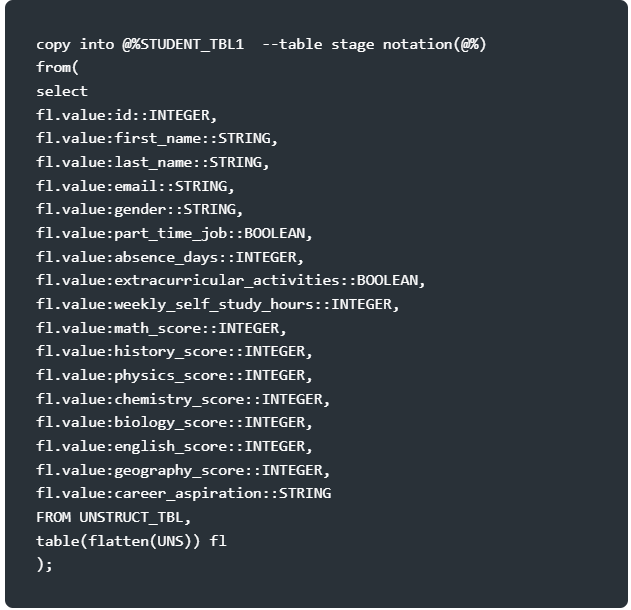

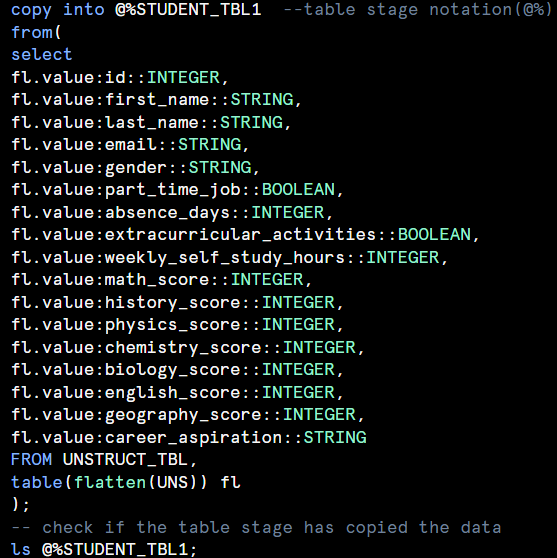

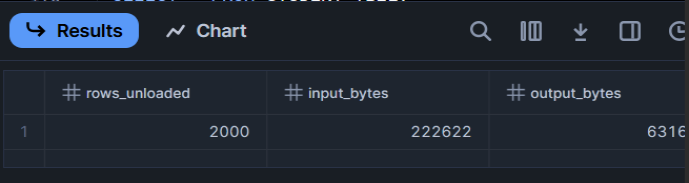

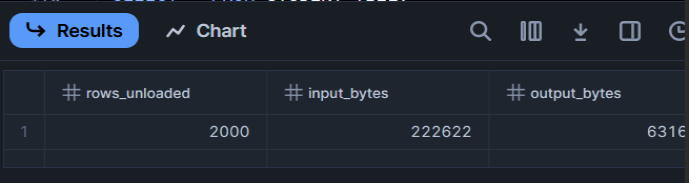

Step 8: Load Directly into a Structured Table

We can’t directly copy the JSON file from external stage to snowflake Sturcutre table. Start with creating another table to load the same file from S3 blog.

Conclusion

You have just taken a big step into the world of Snowflake by learning how to pull JSON data from an AWS S3 bucket and transform it into structured tables. From setting up your S3 connection to parsing JSON, you now have the tools to turn unstructured data into actionable insights. Once your data is loaded, you can leverage Snowflake Cortex AI functions to analyze text data, or build dbt transformation pipelines to model and clean your data. Try it with your own JSON files, and consider using Snowpipe for automation -- see our guide on connecting GCP to Snowflake with Snowpipe for an example.

Frequently Asked Questions

Q: What is unstructured data in Snowflake?

Unstructured data in Snowflake refers to data that does not follow a predefined schema or format, such as JSON files, images, PDFs, and audio files. Snowflake supports storing and processing unstructured data using internal and external stages.

Q: How does Snowflake handle unstructured data?

Snowflake handles unstructured data through stages (internal and external), directory tables for metadata management, and functions like GET_PRESIGNED_URL for secure access. It can also process unstructured data using Snowpark and Snowflake Cortex AI functions.

Q: Can Snowflake process JSON data from AWS S3?

Yes. Snowflake can connect to AWS S3 buckets using external stages, load JSON files, and parse them into structured tables using the COPY INTO command with appropriate file format settings. You can also use FLATTEN to handle nested JSON structures.

Still have questions?

Get AssistanceReady? Let's Talk!

Get expert insights and answers tailored to your business requirements and transformation.

Get Assistance