Performance Tuning in Talend: Optimizing ETL Jobs

Last updated: July 2024

Quick answer: To optimize Talend ETL job performance, push filtering and joins to the database using ELT components (such as tELTMysqlMap), replace tMap lookups with database joins, use tDBOutputBulk + tDBBulkExec for bulk loading instead of row-by-row tDBOutput, increase JVM heap memory via -Xmx settings, enable parallel execution with tParallelize, and process large datasets in batches.

Introduction

Talend performance tuning is essential when ETL jobs process large datasets. A Talend job that runs smoothly with 10,000 rows can struggle with millions of records. Performance tuning in Talend means identifying bottlenecks -- slow lookups, excessive memory usage, row-by-row processing -- and applying proven optimization techniques to make your Talend ETL jobs faster and more resource-efficient.

This guide walks through four of the most effective Talend performance tuning techniques: database-side processing, parallel execution, memory management for lookups, and JVM heap sizing. For detailed bulk loading strategies, see our comparison of tDBOutput, tDBOutputBulk, and tDBBulkExec.

Common Bottlenecks in Talend Jobs

Bottlenecks are the parts of your ETL job that slow everything down. Some common ones are:

- Extracting too much data from databases.

- Performing heavy transformations in Talend instead of the database.

- Large lookups consuming too much memory.

Here are some proven techniques to optimize your ETL jobs:

1. Use Database-Side Processing

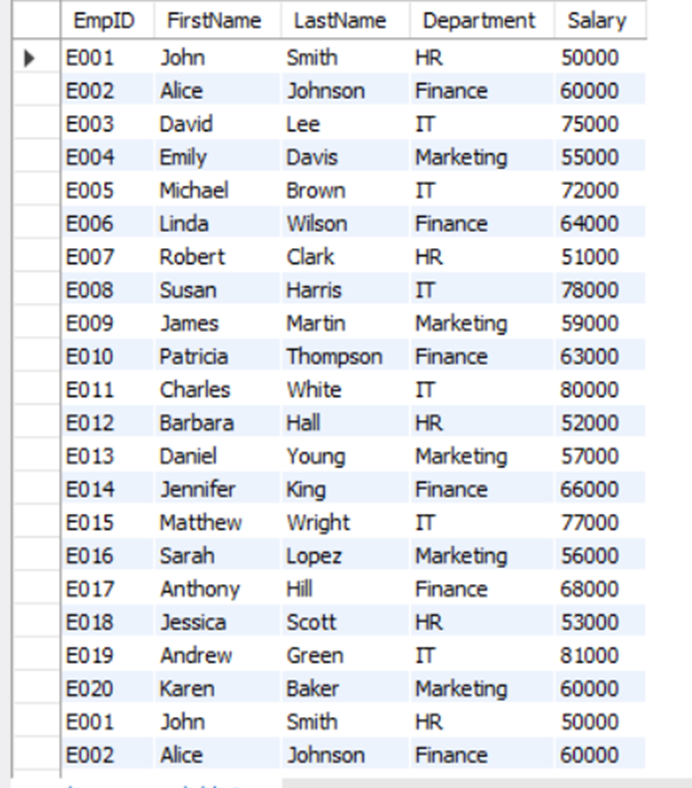

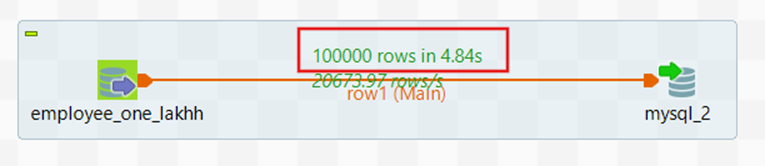

Let the database handle filtering, joins, and aggregations instead of doing everything in Talend. The example here uses the employee table in the database as input.

Consider a simple job: in Talend Studio, read the employee table and load it into a database table.

Without any filtering, the job takes nearly 5 seconds to load the data.

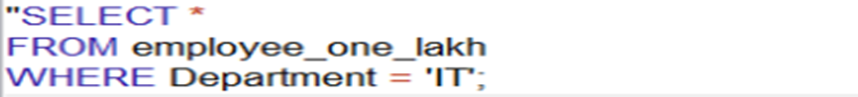

Filter the rows using a WHERE condition:

In the tDBInput component, write a SQL query with a WHERE clause. Instead of loading all rows into Talend and filtering them there, let the query fetch only the rows you need.
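The Query field of tDBInput holds a Java string expression, so the filter can be written directly into the SQL text. The following is a minimal sketch of such an expression, not the query used in the screenshots: the column names (`emp_id`, `name`, `department`, `salary`) and the `minSalary` parameter are hypothetical, and only the `employee` table name comes from the example.

```java
public class EmployeeQuery {
    // Builds the SELECT text that would go into the tDBInput "Query" field.
    // Column names and the salary threshold are assumptions for illustration.
    static String buildQuery(int minSalary) {
        return "SELECT emp_id, name, department, salary "
             + "FROM employee "
             + "WHERE salary >= " + minSalary;
    }

    public static void main(String[] args) {
        // Print the query so it can be checked before pasting it into the component.
        System.out.println(buildQuery(50000));
    }
}
```

Listing only the columns the job needs, rather than using SELECT *, reduces the amount of data transferred in the same way the WHERE clause reduces the number of rows.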

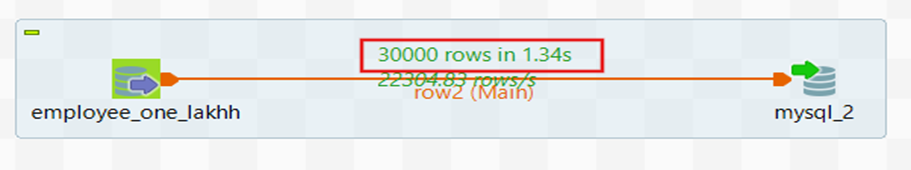

Now execute the job in Talend.

With the WHERE condition applied, execution time drops from nearly 5 seconds to 1.34 seconds.

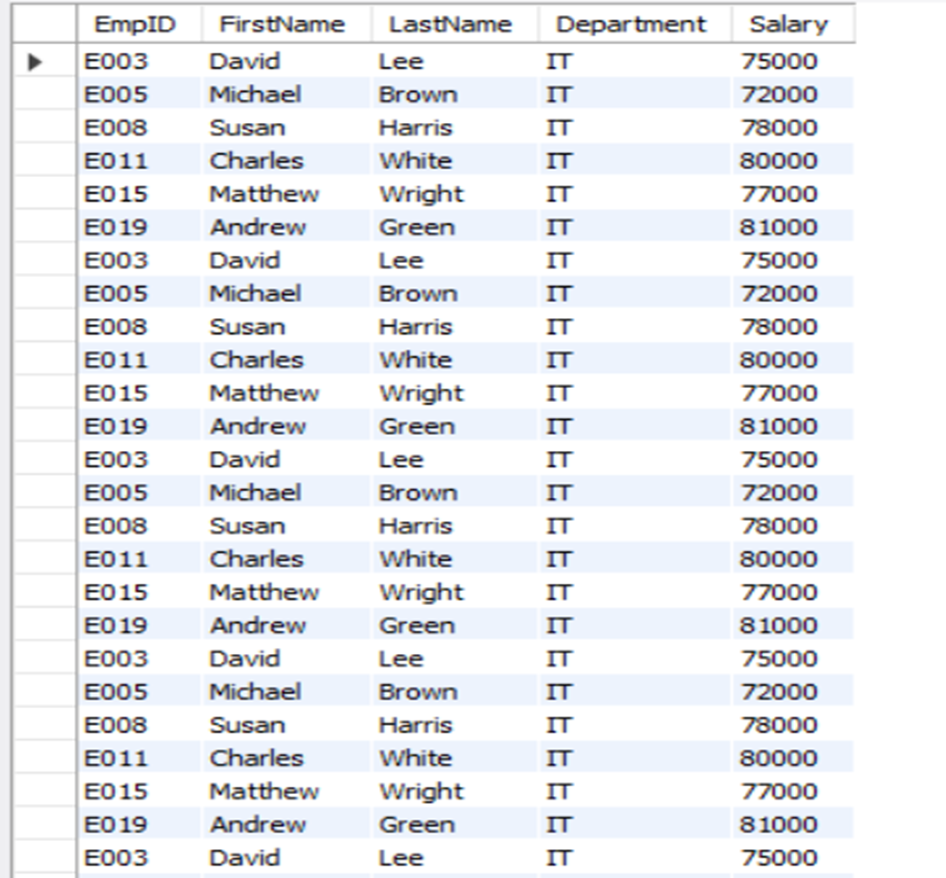

The rows are filtered according to the query. The result is:

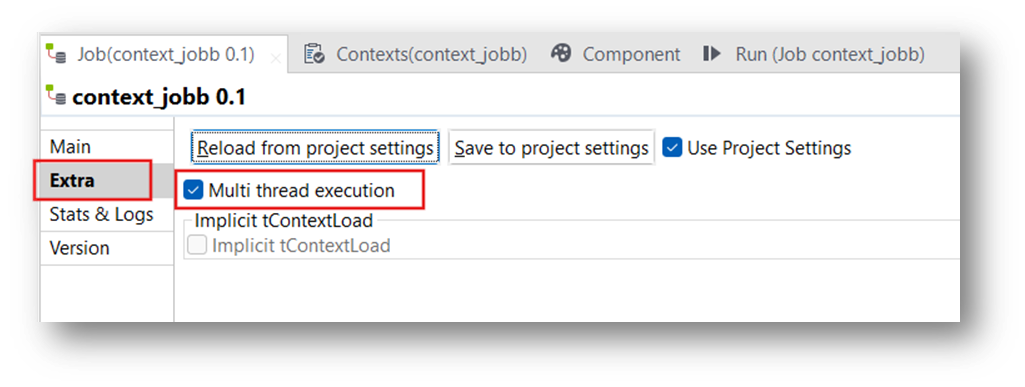

2. Use Parallelization

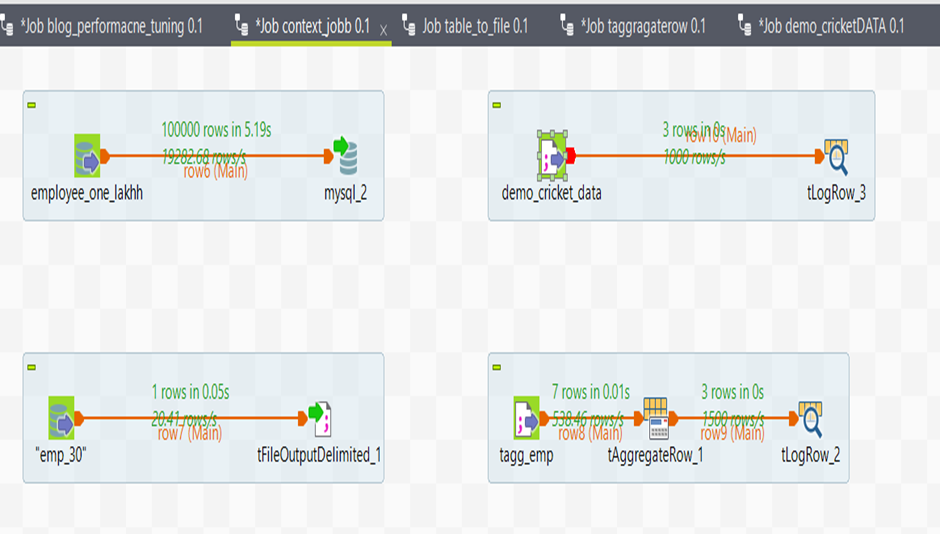

If your job contains multiple independent sub-jobs, enable the multi-thread execution option in the Job view (under the Extra tab) so they can run at the same time.

Multi-thread execution is useful for jobs that can process data streams in parallel.

Now run the job.

Here, all the independent sub-jobs are executed in parallel.
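To illustrate what running independent sub-jobs on separate threads means, here is a minimal plain-Java sketch. It is not the code Talend generates: the `subJob` stand-in, the row counts, and the sleep that simulates work are all hypothetical.

```java
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

public class ParallelSubJobs {
    // Hypothetical stand-in for an independent sub-job; returns the rows it "processed".
    static Callable<Integer> subJob(String name, int rows) {
        return () -> {
            Thread.sleep(100); // simulate work
            System.out.println(name + " finished on " + Thread.currentThread().getName());
            return rows;
        };
    }

    // Runs every sub-job on its own thread and returns the total row count.
    // Expects a non-empty list, because the pool size equals the number of jobs.
    static int runAll(List<Callable<Integer>> jobs) {
        ExecutorService pool = Executors.newFixedThreadPool(jobs.size());
        try {
            int total = 0;
            for (Future<Integer> result : pool.invokeAll(jobs)) {
                total += result.get(); // waits for that sub-job to complete
            }
            return total;
        } catch (InterruptedException | ExecutionException e) {
            throw new IllegalStateException("sub-job failed", e);
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        int total = runAll(List.of(subJob("load_customers", 10), subJob("load_orders", 20)));
        System.out.println("Total rows: " + total);
    }
}
```

The sketch only works because the two tasks share no state. The same holds in Talend: sub-jobs that depend on each other's output should not be run in parallel.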

3. Optimize Memory Management for Lookups

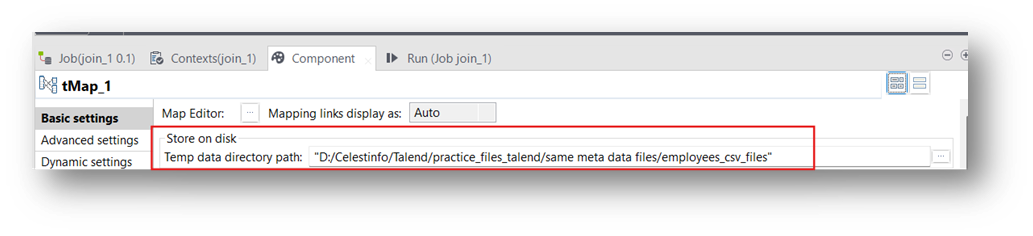

Large lookups in tMap can exhaust memory. To reduce the load, remove unnecessary columns from the lookup flow and, in tMap, tick “Store temp data on disk” (the “Store on disk” option).

With this option enabled, Talend no longer holds the entire lookup dataset in memory. It saves the data to a local temp file on disk and reads from it when needed.

Example in Talend

- Main flow = Customer orders (100,000 rows).

- Lookup flow = Customer details (2 million rows).

If you join in tMap without “Store on disk”, Talend loads all 2 million customer rows into memory and the job may fail with an out-of-memory error. With “Store on disk”, Talend writes those 2 million rows to a temp file on disk and reads only the needed matches, so the job can complete within the available heap, at the cost of slower lookups due to disk I/O.
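Conceptually, an in-memory lookup behaves like a hash join: every lookup row is loaded into a map before the main flow is processed, so memory use grows with the size of the lookup rather than the main flow. The sketch below shows that idea under simplifying assumptions. It is not Talend's generated code, and the class name, the integer customer ids, and the sample names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

public class InMemoryLookup {
    // Joins each order's customer id against a lookup map held entirely in memory.
    // The whole map must fit in the heap, however few orders there are.
    static List<String> join(List<Integer> orderCustomerIds, Map<Integer, String> customersById) {
        List<String> joined = new ArrayList<>();
        for (int id : orderCustomerIds) {
            String name = customersById.get(id);
            if (name != null) { // inner join: skip orders with no matching customer
                joined.add(id + ":" + name);
            }
        }
        return joined;
    }

    public static void main(String[] args) {
        Map<Integer, String> customers = Map.of(1, "Ann", 3, "Bob");
        System.out.println(join(List.of(1, 2, 3), customers));
    }
}
```

With 2 million lookup rows, the map holds 2 million entries, which is why trimming lookup columns or moving the lookup to disk reduces heap pressure.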

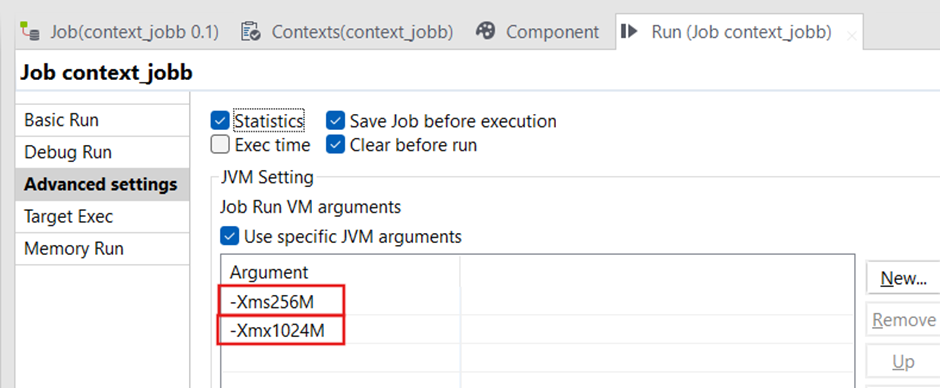

4. Increase JVM Memory Allocation

Open Your Job

In Talend Studio, open the job where you want to increase memory.

Open the Advanced Settings

- Inside the Run tab, click Advanced settings.

- Scroll down until you find JVM Settings.

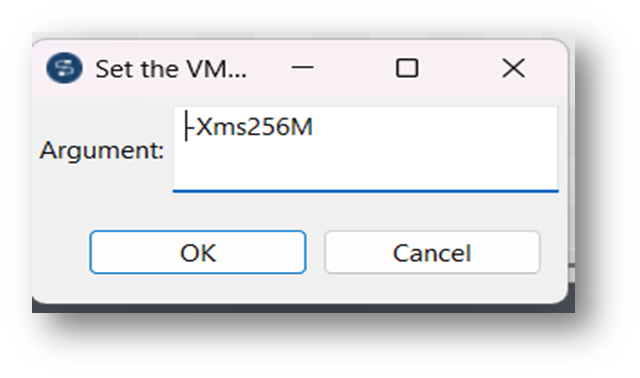

Set JVM Arguments (Heap Size)

In the JVM arguments box, add or modify the memory parameters:

- -Xms512m: Initial memory allocation (start heap size).

- -Xmx2048m: Maximum heap size (increase this as needed).

Example values you can try depending on your system RAM:

- Small jobs: -Xms512m -Xmx1024m

- Medium jobs: -Xms1024m -Xmx2048m

- Large jobs: -Xms2048m -Xmx4096m

This method increases memory only for this job’s run inside Studio.
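One way to confirm that the new heap settings took effect is to print what the JVM reports at runtime. The class below is a minimal sketch using the standard `java.lang.Runtime` API. The class name and the `toMegabytes` helper are hypothetical, and the three print statements could also be placed in a tJava component at the start of the job.

```java
public class HeapCheck {
    // Converts a byte count to whole megabytes.
    static long toMegabytes(long bytes) {
        return bytes / (1024L * 1024L);
    }

    public static void main(String[] args) {
        Runtime rt = Runtime.getRuntime();
        // Upper limit the JVM will try to use; governed by -Xmx.
        System.out.println("Max heap (MB): " + toMegabytes(rt.maxMemory()));
        // Heap currently reserved by the JVM; starts near -Xms and can grow.
        System.out.println("Allocated heap (MB): " + toMegabytes(rt.totalMemory()));
        // Unused portion of the currently reserved heap.
        System.out.println("Free in allocated heap (MB): " + toMegabytes(rt.freeMemory()));
    }
}
```

Depending on the garbage collector, the reported maximum can be somewhat lower than the -Xmx value, so treat it as a sanity check rather than an exact match.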

Conclusion

Performance tuning in Talend is not about redesigning everything -- it is about making smart adjustments to eliminate bottlenecks. If you are experiencing memory-related slowdowns, see our dedicated guide on solving heap memory issues in Talend. By pushing work to the database, handling lookups efficiently, using bulk operations, and managing resources wisely, you can make your Talend jobs faster, scalable, and production-ready. For a broader perspective on optimizing compute workloads, read our article on managing compute workloads for ETL vs analytics.

Frequently Asked Questions

Q: What is performance tuning in Talend?

Performance tuning in Talend means improving the speed, efficiency, and resource usage of your ETL jobs by identifying slow parts (bottlenecks) and optimizing them. Techniques include pushing processing to the database, using parallelization, optimizing memory management for lookups, and increasing JVM memory allocation.

Q: How do I use parallelization in Talend to speed up jobs?

Enable multi-thread execution in the Job view (under the Extra tab) for independent sub-jobs. This allows multiple sub-jobs to run simultaneously, which is especially useful for jobs that can process data streams in parallel.

Q: How do I increase JVM memory for a Talend job?

In Talend Studio, open the job and go to the Run tab, then click Advanced Settings. Scroll to JVM Settings and add or modify the memory parameters: -Xms512m for initial heap size and -Xmx2048m for maximum heap size. Adjust values based on your system RAM and job requirements.
