Solving Heap Memory Issues in Talend: A Complete Guide

Last updated: August 2024

Quick answer: Fix Talend Java Heap Space errors by increasing JVM memory allocation: go to Run > Advanced Settings and set -Xms256m -Xmx1024m (or higher based on data volume). Also replace in-memory tMap lookups with database joins, use tFileOutputDelimited for staging instead of holding data in memory, process data in batches, and use tDBOutputBulk for bulk loading.

Talend jobs run on top of Java. The heap memory is the portion of RAM allocated to the JVM to store objects created during job execution.

Introduction

The Java Heap Space error is the most frequent memory issue in Talend, occurring when the JVM runs out of heap memory during job execution. Talend heap memory issues affect jobs processing large datasets, complex lookups, or multiple data flows. Heap memory problems often go hand-in-hand with Null Pointer Exceptions in Talend, so fixing both is essential for stable jobs. This guide covers the root causes of heap memory errors in Talend and provides practical solutions including JVM tuning, component optimization, and architectural best practices for data-intensive Talend jobs.

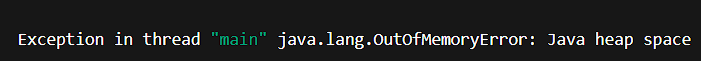

When working with large datasets or complex jobs in Talend, one of the most common errors developers encounter is the dreaded Java Heap Space error. It typically appears in the console as java.lang.OutOfMemoryError: Java heap space.

This error signals that Talend’s underlying Java Virtual Machine (JVM) has run out of memory. Left unresolved, it can cause jobs to fail, slow down, or even crash.

In this blog, let’s break down why heap memory issues occur in Talend, and how you can prevent and fix them effectively.

Common Causes of Heap Memory Issues in Talend

- Processing huge files or datasets entirely in memory.

- Improper component usage, for example tMap holding millions of rows without filtering.

- Joins on large datasets using tMap instead of database-side joins.

- Insufficient JVM memory allocation (default heap size too small).

- Memory leaks caused by not releasing unused objects.

Best Practices to Fix Heap Memory Issues

1. Increase JVM Memory Allocation

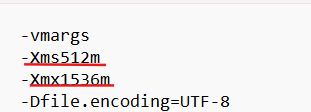

- In the Talend installation folder, locate the Talend-Studio.ini file. The exact file name varies by product and platform (for example, TOS_BD-win-x86_64.ini).

- Edit this file to permanently increase the heap memory available to Talend Studio.

- Increase the -Xms (initial heap size) and -Xmx (maximum heap size).

With -Xms512m and -Xmx1536m, for example, heap memory starts at 512 MB and can grow up to 1.5 GB. Adjust the values to your system's RAM; the -Xms value must not exceed the -Xmx value. For job execution in Talend JobServer or Talend Administration Center (TAC), increase memory in the JVM parameters of the execution task.
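The rule that -Xms must not exceed -Xmx can be checked before a job is launched. The following is a minimal sketch in plain Java, not part of Talend: the class name HeapArgs and its methods are hypothetical, and it assumes the common k, m, and g size suffixes.

```java
import java.util.Locale;

public class HeapArgs {
    /** Parses a JVM size such as "512m", "2g", or "1048576" into bytes. */
    public static long parseSize(String value) {
        String v = value.trim().toLowerCase(Locale.ROOT);
        if (v.isEmpty()) {
            throw new IllegalArgumentException("empty size");
        }
        long multiplier;
        switch (v.charAt(v.length() - 1)) {
            case 'k': multiplier = 1024L; break;
            case 'm': multiplier = 1024L * 1024L; break;
            case 'g': multiplier = 1024L * 1024L * 1024L; break;
            default:  multiplier = 1L; // no suffix: the value is already in bytes
        }
        String digits = multiplier == 1L ? v : v.substring(0, v.length() - 1);
        return Long.parseLong(digits) * multiplier;
    }

    /** Returns true when the initial heap size does not exceed the maximum. */
    public static boolean isValid(String jvmArgs) {
        long xms = -1;
        long xmx = -1;
        for (String arg : jvmArgs.trim().split("\\s+")) {
            if (arg.startsWith("-Xms")) xms = parseSize(arg.substring(4));
            if (arg.startsWith("-Xmx")) xmx = parseSize(arg.substring(4));
        }
        // A missing flag falls back to the JVM default, so compare only when both are set.
        return xms < 0 || xmx < 0 || xms <= xmx;
    }

    public static void main(String[] args) {
        System.out.println(isValid("-Xms512m -Xmx1536m"));  // true
        System.out.println(isValid("-Xms2048m -Xmx1024m")); // false
    }
}
```

A check like this could run in a deployment script to catch a swapped pair of values before the JVM refuses to start.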

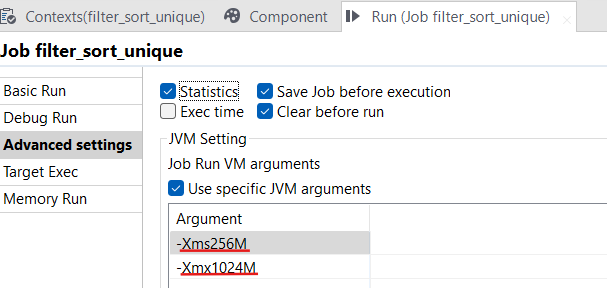

2. Increase JVM Memory Allocation (Alternative Method)

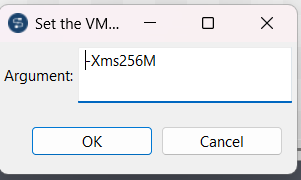

Open Your Job

In Talend Studio, open the job for which you want to increase memory.

Go to the Run View

- In the bottom panel, you will see tabs such as Run (Job), Component, and Context.

- Click the Run tab.

Open the Advanced Settings

- Inside the Run tab, click Advanced settings.

- Scroll down until you find JVM Settings.

Set JVM Arguments (Heap Size)

In the JVM arguments box, add or modify the memory parameters:

- -Xms512m: Initial memory allocation (start heap size).

- -Xmx2048m: Maximum heap size (increase this as needed).

Values you can try depending on your system RAM:

- Small jobs: -Xms512m -Xmx1024m

- Medium jobs: -Xms1024m -Xmx2048m

- Large jobs: -Xms2048m -Xmx4096m

This method increases memory only for this particular job’s run inside Studio.
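To confirm that the new settings actually reached the job's JVM, the heap limits can be printed at runtime. The sketch below uses only the standard java.lang.Runtime API; the class name HeapReport is hypothetical, and calling it from a tJava component at the start of the job is an assumption about how you might wire it in. The reported maximum may differ slightly from the -Xmx value, depending on the JVM and garbage collector.

```java
import java.util.Locale;

public class HeapReport {
    private static final long MIB = 1024L * 1024L;

    /** Formats heap figures (given in bytes) as whole mebibytes. */
    public static String describe(long maxBytes, long committedBytes, long freeBytes) {
        long usedBytes = committedBytes - freeBytes;
        return String.format(Locale.ROOT, "max=%d MiB, committed=%d MiB, used=%d MiB",
                maxBytes / MIB, committedBytes / MIB, usedBytes / MIB);
    }

    /** Reads the figures for the JVM that is currently running. */
    public static String current() {
        Runtime rt = Runtime.getRuntime();
        return describe(rt.maxMemory(), rt.totalMemory(), rt.freeMemory());
    }

    public static void main(String[] args) {
        System.out.println(current());
    }
}
```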

3. Optimize Job Design

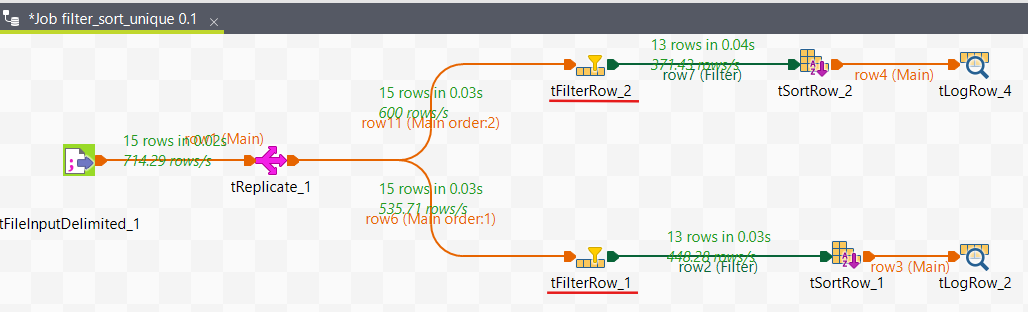

- Filter early: Use tFilterRow or conditions in tInput components to reduce data volume.

- Push down transformations: Perform heavy joins and aggregations directly in the database using tELT components.

- Use streaming: Instead of loading everything into memory, process records row by row.
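The difference between filtering early with row-by-row processing and loading everything first can be illustrated in plain Java. This is a sketch of the general idea, not of how any Talend component is implemented. The class name StreamingFilter and the three-column layout (id, country, amount) are hypothetical.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.Reader;
import java.io.StringReader;
import java.io.UncheckedIOException;

public class StreamingFilter {
    /**
     * Sums the amount column for one country. Rows are read one at a time,
     * filtered immediately, and never collected into a list.
     */
    public static long sumAmountForCountry(Reader source, String country) {
        try (BufferedReader reader = new BufferedReader(source)) {
            return reader.lines()
                    .map(line -> line.split(",", -1))
                    // Filter early: rows for other countries are dropped right here.
                    .filter(cols -> cols.length == 3 && cols[1].equals(country))
                    .mapToLong(cols -> Long.parseLong(cols[2].trim()))
                    .sum();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        String csv = "1,US,100\n2,DE,250\n3,US,40\n";
        System.out.println(sumAmountForCountry(new StringReader(csv), "US")); // 140
    }
}
```

Because only one row is held at a time, memory use stays roughly constant regardless of how large the input is.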

The image below shows tFilterRow components used to reduce data volume.

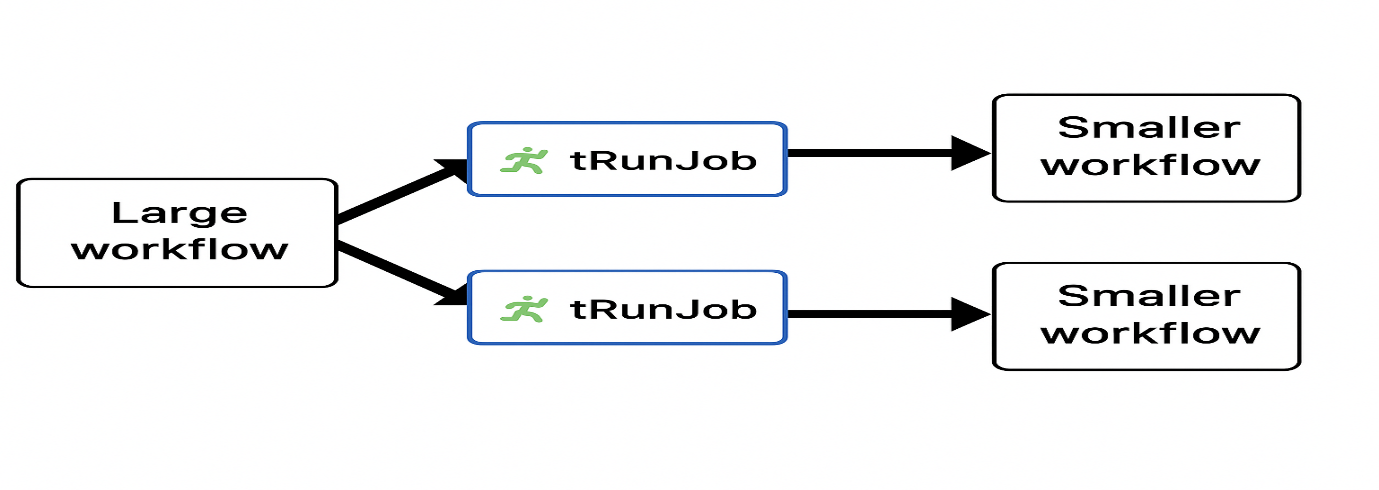

4. Break Down Large Jobs

- Split large workflows into smaller subjobs using tRunJob.

- Write intermediate data to temporary files or staging tables.

- This reduces memory load on a single job.
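The staging idea can be sketched in plain Java: one stage writes its output to a temporary file, and the next stage reads that file back line by line, so neither stage holds the full dataset in memory. The class name StagedPipeline and the trivial uppercase transformation are hypothetical placeholders for real subjob logic.

```java
import java.io.BufferedWriter;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Arrays;
import java.util.Iterator;
import java.util.Locale;
import java.util.stream.Stream;

public class StagedPipeline {
    /** Stage 1: normalize each row and write it to a temporary staging file. */
    public static Path stage(Iterator<String> rows) {
        try {
            Path staging = Files.createTempFile("stage-", ".csv");
            try (BufferedWriter out = Files.newBufferedWriter(staging, StandardCharsets.UTF_8)) {
                while (rows.hasNext()) {
                    out.write(rows.next().trim().toUpperCase(Locale.ROOT));
                    out.newLine();
                }
            }
            return staging;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Stage 2: read the staging file line by line and count the non-empty rows. */
    public static long countNonEmpty(Path staging) {
        try (Stream<String> lines = Files.lines(staging, StandardCharsets.UTF_8)) {
            return lines.filter(line -> !line.isEmpty()).count();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) throws IOException {
        Path staging = stage(Arrays.asList(" a,1 ", "b,2", "").iterator());
        System.out.println(countNonEmpty(staging)); // 2
        Files.deleteIfExists(staging);
    }
}
```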

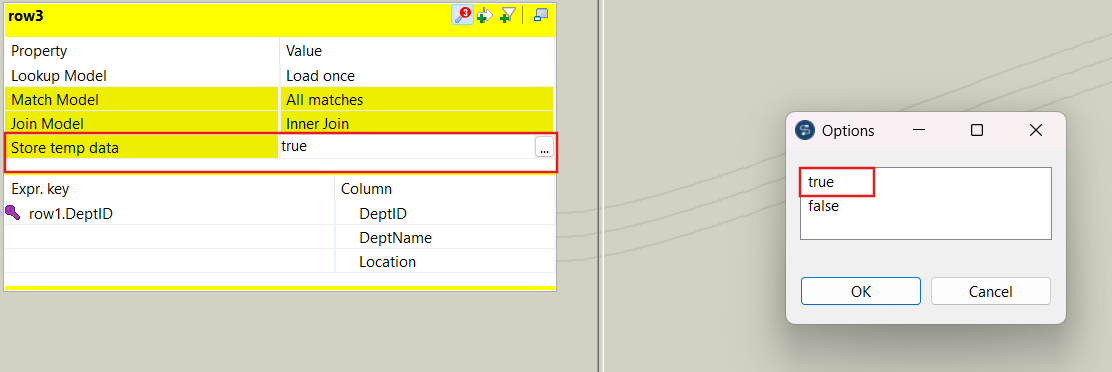

5. Use the Right Component Settings

In tMap, enable the “Store temp data” option for large lookups (saves data on disk instead of RAM).

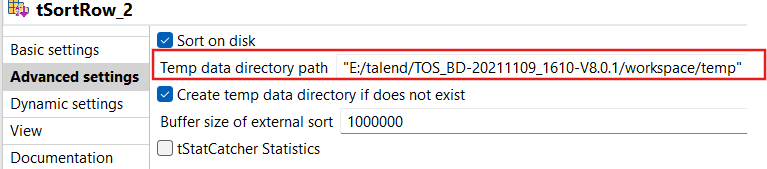

Use tSortRow with external sorting instead of in-memory sorting.
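External sorting trades memory for disk I/O. The sketch below shows the general technique (sorted runs spilled to disk, followed by a k-way merge) in plain Java. It is an illustration only and makes no claim about how tSortRow works internally. The class name ExternalSort and the chunkSize parameter are hypothetical, and rows are assumed to be single-line strings.

```java
import java.io.BufferedReader;
import java.io.BufferedWriter;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.Iterator;
import java.util.List;
import java.util.PriorityQueue;

public class ExternalSort {
    /** A sorted run on disk plus the line currently at its head. */
    private static final class Run implements Comparable<Run> {
        final BufferedReader reader;
        String head;

        Run(Path file) throws IOException {
            reader = Files.newBufferedReader(file);
            head = reader.readLine();
        }

        boolean advance() throws IOException {
            head = reader.readLine();
            return head != null;
        }

        @Override
        public int compareTo(Run other) {
            return head.compareTo(other.head);
        }
    }

    /** Sorts rows while holding at most chunkSize rows in memory; returns the sorted file. */
    public static Path sort(Iterator<String> rows, int chunkSize) {
        try {
            // Phase 1: cut the input into sorted runs and spill each run to a temp file.
            List<Path> runFiles = new ArrayList<>();
            List<String> chunk = new ArrayList<>();
            while (rows.hasNext()) {
                chunk.add(rows.next());
                if (chunk.size() >= chunkSize || !rows.hasNext()) {
                    Collections.sort(chunk);
                    Path runFile = Files.createTempFile("run-", ".txt");
                    Files.write(runFile, chunk);
                    runFiles.add(runFile);
                    chunk.clear();
                }
            }
            // Phase 2: k-way merge, always emitting the smallest head among the runs.
            PriorityQueue<Run> heap = new PriorityQueue<>();
            for (Path file : runFiles) {
                Run run = new Run(file);
                if (run.head != null) heap.add(run); else run.reader.close();
            }
            Path result = Files.createTempFile("sorted-", ".txt");
            try (BufferedWriter out = Files.newBufferedWriter(result)) {
                while (!heap.isEmpty()) {
                    Run smallest = heap.poll();
                    out.write(smallest.head);
                    out.newLine();
                    if (smallest.advance()) heap.add(smallest); else smallest.reader.close();
                }
            }
            for (Path file : runFiles) Files.delete(file);
            return result;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Convenience helper for small results: reads the sorted file into a list. */
    public static List<String> readLines(Path file) {
        try {
            return Files.readAllLines(file);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        Path sorted = sort(Arrays.asList("pear", "apple", "fig", "kiwi", "banana").iterator(), 2);
        System.out.println(readLines(sorted)); // [apple, banana, fig, kiwi, pear]
    }
}
```

Only one chunk of rows, plus one line per run during the merge, is held in memory at any time, which is why disk-backed sorting avoids the heap pressure of sorting the whole dataset in RAM.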

Real-World Example

A Talend job was failing with a heap memory error while processing a 2 GB CSV file. After investigation:

- The default heap (512 MB initial, 1536 MB maximum) was too small.

- The job used tMap to join two full datasets in memory.

Fix:

1. Increased the maximum heap size from -Xmx1536m to -Xmx4096m.

2. Added filtering in the input stage to reduce row volume.

Result

The job completed successfully within a few minutes without heap memory issues.

Conclusion

Heap memory errors in Talend are common, but they are not unfixable. By combining the right configuration (increasing JVM memory) with job design best practices (filtering, modularization, database pushdown), you can build ETL jobs that are both efficient and stable. For more optimization techniques, see our comprehensive Talend performance tuning guide and our article on choosing between tDBOutput and bulk loading components.

Frequently Asked Questions

Q: What causes Java heap space errors in Talend?

Java heap space errors in Talend occur when the JVM runs out of allocated memory. Common causes include processing huge files or datasets entirely in memory, improper component usage such as tMap holding millions of rows without filtering, joins on large datasets using tMap instead of database-side joins, insufficient JVM memory allocation, and memory leaks caused by not releasing unused objects.

Q: How do I increase heap memory in Talend?

You can increase heap memory in Talend by editing the Talend-Studio.ini file in the Talend installation folder and modifying the -Xms (initial heap size) and -Xmx (maximum heap size) values. Alternatively, for individual jobs, go to the Run tab, click Advanced Settings, scroll to JVM Settings, and set the -Xms and -Xmx parameters.

Q: What are best practices to avoid heap memory issues in Talend?

Best practices include filtering data early using tFilterRow, pushing heavy joins and aggregations to the database using tELT components, processing records row by row instead of loading everything into memory, breaking large workflows into smaller subjobs using tRunJob, and enabling the "Store temp data" option in tMap for large lookups.
